Question:

As shown in the circuit below, a constant voltage source is connected to two ideal resistors.

The voltage drop across a resistor is measured using two different voltmeters V1 and V2 at five different time instances and the following values are recorded from V1 and V2.

Hint:

Precision refers to the consistency of measurements, while accuracy refers to how close a measurement is to the true value.

Updated On: Dec 24, 2025

- (A) V1 is less accurate, V2 is more precise

- (B) V1 is more accurate, V2 is more precise

- (C) V1 is less accurate, V2 is less precise

- (D) V1 is more accurate, V2 is less precise


Verified By Collegedunia

The Correct Option is B

Solution and Explanation

Understanding Accuracy and Precision:

- Accuracy refers to how close a measurement is to the true or accepted value.

- Precision refers to how consistently repeated measurements yield the same result, regardless of whether the result is close to the true value.

Analyzing the Data:

We are given readings from two voltmeters, V1 and V2, at five different time instances.

\[ \text{Readings from V1: } 2.479, 2.483, 2.495, 2.508, 2.511 \]

\[ \text{Readings from V2: } 2.465, 2.468, 2.470, 2.472, 2.475 \]

Precision:

- For V1, the readings vary more widely between time instances: the difference between the highest and lowest values is \( 2.511 - 2.479 = 0.032 \).

- For V2, the readings are more tightly grouped: the difference between the highest and lowest values is \( 2.475 - 2.465 = 0.010 \).

Since V2 has a smaller range of readings, it is more precise than V1.
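The range comparison above can be checked numerically. The following is a minimal Python sketch, not part of the original solution; the helper name `spread` is hypothetical, and the sample standard deviation is added only as a second, commonly used measure of spread.

```python
from statistics import stdev

# Readings quoted in the solution (volts)
v1 = [2.479, 2.483, 2.495, 2.508, 2.511]
v2 = [2.465, 2.468, 2.470, 2.472, 2.475]

def spread(readings):
    """Return (range, sample standard deviation) of a list of readings."""
    return max(readings) - min(readings), stdev(readings)

range_v1, sd_v1 = spread(v1)
range_v2, sd_v2 = spread(v2)

print(f"V1: range = {range_v1:.3f} V, std dev = {sd_v1:.4f} V")
print(f"V2: range = {range_v2:.3f} V, std dev = {sd_v2:.4f} V")

# The instrument with the smaller spread is the more precise one
print("More precise:", "V2" if sd_v2 < sd_v1 else "V1")
```

Both measures point the same way here: V2 has the smaller range and the smaller standard deviation.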

Accuracy:

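- The values from V1 are generally higher than those from V2: the mean of the V1 readings is \( 12.476 / 5 = 2.4952 \), and the mean of the V2 readings is \( 12.350 / 5 = 2.470 \).

- Accuracy cannot be inferred from how tightly the readings cluster. It depends on the true voltage drop, which is fixed by the source voltage and the two resistor values given in the circuit figure.

- V1 is the more accurate instrument whenever the true value lies above the midpoint of the two means, \( (2.4952 + 2.470) / 2 \approx 2.483 \). The stated answer corresponds to this case: V1's readings lie closer to the true value even though they are more scattered.

Thus, the correct statement is Option (B): V1 is more accurate, V2 is more precise.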
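Accuracy can only be judged against a true value, and the text here does not state one. The sketch below is therefore illustrative only: `ASSUMED_TRUE_VALUE = 2.5` is a hypothetical placeholder chosen for demonstration, and the helper names `mean_error` and `more_accurate` are not from the original solution. It compares the mean of each voltmeter's readings with the assumed true value.

```python
from statistics import mean

# Readings quoted in the solution (volts)
v1 = [2.479, 2.483, 2.495, 2.508, 2.511]
v2 = [2.465, 2.468, 2.470, 2.472, 2.475]

def mean_error(readings, true_value):
    """Absolute difference between the mean reading and the true value."""
    return abs(mean(readings) - true_value)

def more_accurate(true_value):
    """Name the voltmeter whose mean reading is closer to true_value."""
    e1 = mean_error(v1, true_value)
    e2 = mean_error(v2, true_value)
    return "V1" if e1 < e2 else "V2"

# Hypothetical true value, NOT taken from the text; the real one
# follows from the source voltage and resistors in the circuit figure.
ASSUMED_TRUE_VALUE = 2.5

print(f"mean V1 = {mean(v1):.4f} V, mean V2 = {mean(v2):.4f} V")
print("More accurate (under the assumption):", more_accurate(ASSUMED_TRUE_VALUE))
```

Under this assumption the function returns V1, which agrees with Option (B). A true value below the midpoint of the two means (about 2.483 V) would instead favour V2, which is why the circuit values matter.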

Top GATE BM Measurements and Control Systems Questions

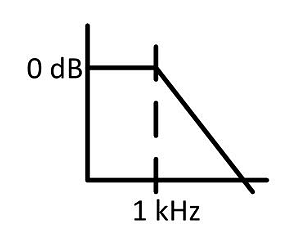

- The Bode plot of a 2nd order low pass filter is shown in the figure below. What is the frequency at which the attenuation is 80 dB ?

- For the balanced Owen-bridge circuit shown in the figure, the values of $L_x$ and $R_x$ are:

- The block diagrams of an ideal system and a real system with their impulse responses are shown below. An auxiliary path is added to the delayed impulse response in the real system. For a unit impulse input ($x(t)=\delta(t)$) to both systems, gain $\beta$ is chosen such that $y(4T)$ is the same for both systems. The value of $\beta$ is _________.

- Independent voltage measurements \((\mu \pm \sigma)\) of three sensors where \(\mu\) and \(\sigma\) are the mean and standard deviation of the measurements, respectively are as follows: \(v_1 = 4.52 \pm 0.02 \, \text{V}, \, v_2 = 4.21 \pm 0.20 \, \text{V}, \, v_3 = 3.96 \pm 0.15 \, \text{V}\). The measurement uncertainty in \(v_1 + v_2 + v_3\) is ________ V (rounded off to two decimal places).

- A moving coil voltmeter has an internal resistance of 50 \(\Omega\). The scale of the meter is divided into 100 equal divisions. When a potential of 1 V is applied to terminals of the voltmeter, a deflection of 100 divisions is obtained. However, it is desired that when a potential of 500 V is applied to the terminals, a deflection of 100 divisions should be obtained. The value of resistance that needs to be connected in series to achieve this is ________ \(\Omega\).

View More Questions

Top GATE BM Questions

- What is the value of the following complex line integral counter-clockwise ?

\(\oint_{|z|=3}\frac{8}{z(z-2)(z-4)}dz\) - To solve the equation x = 2 cos x using Newton-Raphson's method, which one of the following iterations should be used ?

- During the repolarization phase of a neuron, the cell is brought back to the resting potential by the action of a Sodium-Potassium pump. Which one of the following statements is TRUE for the active transport of Na+ and K+ ions through the cell membrane ?

- The cardiac rhythm in a healthy human heart originates from ______.

- Which one of the following events is NOT typically encountered in diagnostic X-ray projection radiography ?

View More Questions